What do Augmented Reality/Virtual Reality (AR/VR), cloud gaming, smart cities, 5G, autonomous vehicles, healthcare sensors, surveillance, and facial recognition all have in common? The need for low-latency connectivity enabled by networks architected with edge computing.

For some service providers, edge computing trials have already started. For others, edge computing plans won’t be formulated for a few years. But whether they’ve already devised their edge computing strategy or haven’t yet begun, the first question they need to ask themselves is: “What is edge computing?” At Broadband Success Partners, we’ve done just that. We put this question and seven others to 24 network engineering and commercial services executives at Tier 1 and Tier 2-3 MSOs. Here are their answers and our insights.

The Highlights

- Headends and hub sites are preferred locations for edge equipment—together they were chosen by close to two-thirds of executives;

- Over half of executives (29% and 24%, respectively) view “improved customer experience” or “enablement of new revenue streams” as the most important edge computing driver;

- Cloud gaming is the primary edge computing use case—after video caching;

- Operations to support monetizing new services is the number one edge computing challenge, and

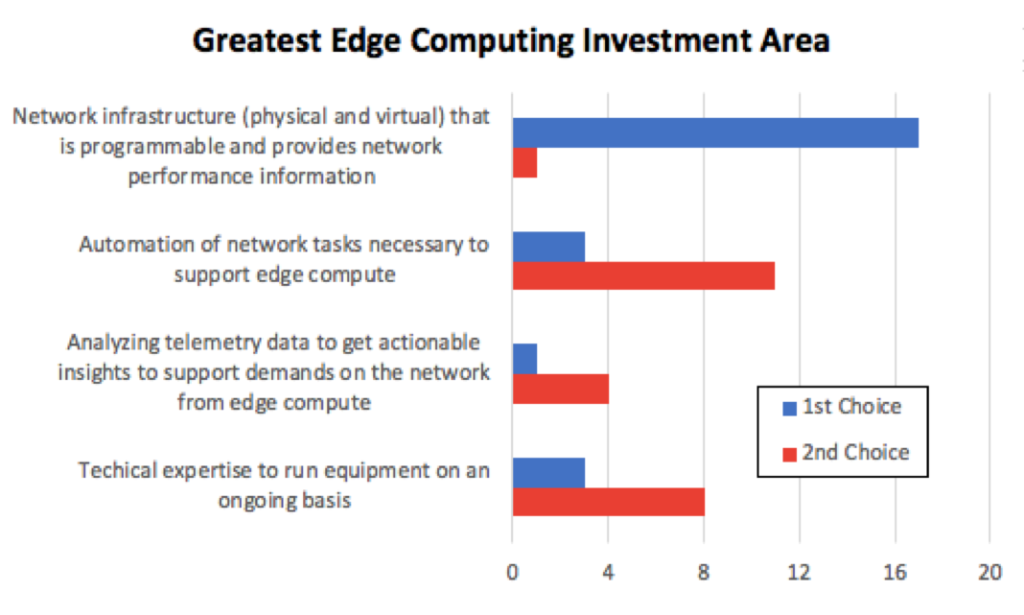

- Cable operators believe programmable infrastructure and network automation are the top investment areas to move to edge computing.

1. What is edge computing?

There’s no single definition. The surveyed cable operators are deploying edge computing (or expect to) in one of three ways:

- According to 43% of those interviewed, “transforming headends and hub sites to mini data centers, or Headend Re-architected as a Data Center (HERD)” is what best describes their edge computing initiatives.

- A third of the executives cited, “Distributing compute and virtualization via Flexible MAC Architecture (FMA).”

- The balance of those interviewed, or 24%, approach it as, “Building new edge sites with compute and storage closer to end customers.”

These varying views are due, in large part, to each executive’s preferred edge computing use cases. For example, if their primary applications are less latency-sensitive, such as video caching or SD-WAN, they skew toward a less distributed compute architecture. In contrast, those thinking in terms of VR/AR, gaming, or autonomous vehicles, which have little to no tolerance for latency, gravitate toward a more distributed configuration.

2. Where is edge computing?

Naturally, we next asked this question: Where will edge equipment be located? With many noting that their goal is to get as close to the edge of the network as possible, it’s not surprising that the two top answers were:

- Headends: 33%

- Hub sites: 29%

An interesting split emerges when you view the results by company size. Tier 1 cable operators are more likely to place edge computing hardware in hub sites rather than headends. The opposite holds true for the Tier 2-3s. This difference is due, in part, to the cost of scaling up and out to place edge devices in many hub sites versus fewer headends. This expense needs to be considered relative to the allowable latency. As one executive explained, “Our decision depends on the tipping point of finances and latency tolerance.”
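The “tipping point” between cost and latency tolerance can be illustrated with a small sketch. It picks the lowest-cost location type whose latency still fits a given budget. Every name and number here (`SiteTier`, the site counts, per-site costs, and round-trip times) is a hypothetical assumption for illustration; none of it comes from the survey.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class SiteTier:
    """A hypothetical class of location that could host edge hardware."""
    name: str
    site_count: int        # sites that would need edge hardware
    cost_per_site: float   # illustrative cost units per site
    round_trip_ms: float   # illustrative round-trip latency to the customer

    @property
    def total_cost(self) -> float:
        return self.site_count * self.cost_per_site


def cheapest_tier_within_budget(
    tiers: Sequence[SiteTier], latency_budget_ms: float
) -> Optional[SiteTier]:
    """Return the lowest-cost tier that meets the latency budget, or None."""
    eligible = [t for t in tiers if t.round_trip_ms <= latency_budget_ms]
    return min(eligible, key=lambda t: t.total_cost, default=None)


# Made-up figures: fewer, farther headends versus many, closer hub sites.
TIERS = [
    SiteTier("headend", site_count=10, cost_per_site=100.0, round_trip_ms=20.0),
    SiteTier("hub site", site_count=80, cost_per_site=40.0, round_trip_ms=8.0),
]


def pick(latency_budget_ms: float) -> Optional[str]:
    """Name of the chosen tier for a latency budget, or None if none fits."""
    tier = cheapest_tier_within_budget(TIERS, latency_budget_ms)
    return tier.name if tier else None


if __name__ == "__main__":
    for budget in (30.0, 10.0, 5.0):
        print(f"{budget} ms budget -> {pick(budget)}")
```

With these assumed figures, a relaxed latency budget favors the cheaper headend rollout, a tighter one forces the move out to hub sites, and a budget that neither location meets returns no option at all.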

Deployment also depends on where the equipment can more easily reside. As one Tier 2-3 executive noted, “The existing headend facilities have space and power to accommodate edge hardware.” The density of the network could also be a decision variable. In a rural system, where each headend serves relatively few customers, edge hardware could sit in the headend. In a suburban system, it could instead sit in a hub site that serves more customers.

The hub site versus headend decision appears to be fluid. For example, one interviewee noted that the two types of locations are interchangeable. A few others said they’re starting with less costly headend deployments and will then migrate outwards to hub sites at a later date.

3. What’s driving your company to edge computing?

Over half of the executives noted either “improved customer experience” or “the enablement of new revenue streams” as the most important driver for edge computing in their company. Among the 29% who indicated “improved customer experience,” here’s the rationale some gave:

- Edge computing implementation must serve the customer’s needs.

- Customer satisfaction with lower latency for gaming and video optimization is important.

As to why 24% of those interviewed noted the “enablement of new revenue streams” as the top driver, here are a few of the reasons:

- Due to financial priorities; tasked with new revenue growth.

- For HFC to go to 10 Gbit/s, we need a distributed architecture; only way to achieve this.

The two top drivers align nicely in that new revenue streams can only be created if the customer is satisfied with the new services.

4. What is the largest opportunity area for your company with edge computing?

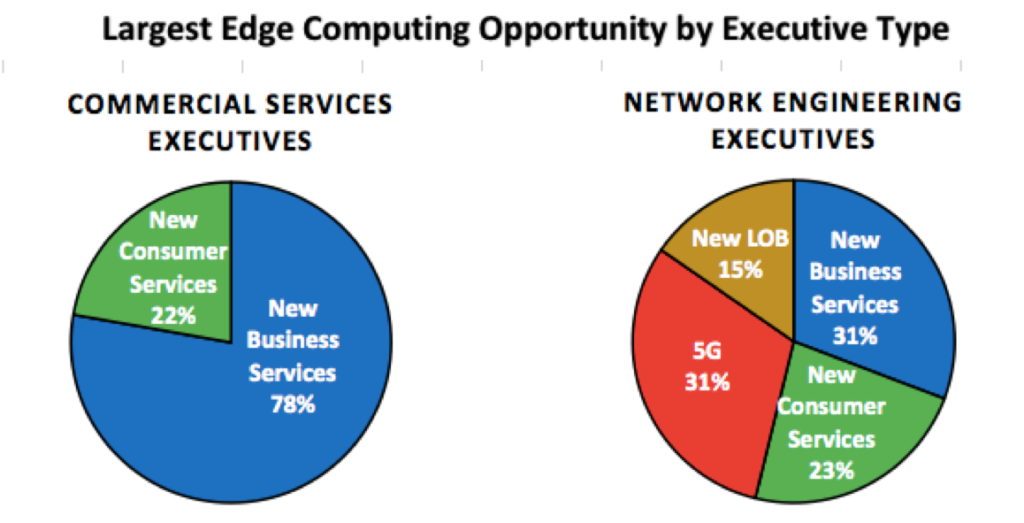

Half of the executives see “new business services” as the greatest edge computing opportunity. As you see here, almost 80% of the Business Services executives we interviewed chose “new business services.” Network engineering executives split between “new business services” and “enabling MSOs to play a larger role in 5G,” with each indicated by 31% of the interviewees.

5. Which edge computing use case do you expect will develop next (or further)?

Pandemic-triggered sheltering-at-home caused an explosion in online gaming—an application that relies on a low-latency network with compute equipment close to the edge. In fact, cloud-based gaming is the number-one edge computing use case according to our interviewees.

Why? One executive said that “these are delay-sensitive applications, which benefit most from edge computing.” Another noted the growth in offerings by Google, Facebook, Shadow, and others.

After cloud gaming, the next-ranked use cases were healthcare sensors & telemetry (massive IoT) and surveillance & physical security.

6. What is the most significant challenge to supporting edge computing?

Close to half of the executives cited operations to support monetizing new services as the biggest impediment.

Why? According to one of the executives, “Edge computing is a new paradigm driving new workflows.” Another respondent highlighted their “inflexible OSS/BSS systems—preventing an agile approach to innovation.”

7. Where is the investment greatest to progress edge computing?

Far and away, programmable infrastructure is the number one investment area. Network automation ranks number two. As to why programmable infrastructure is the primary funding area, this answer from one executive was typical: “We need to rethink our network topology. To move to a distributed architecture, a massive number of new elements go into the infrastructure and must integrate with the existing hardware.”

8. Does your company have a strategy to incorporate edge computing?

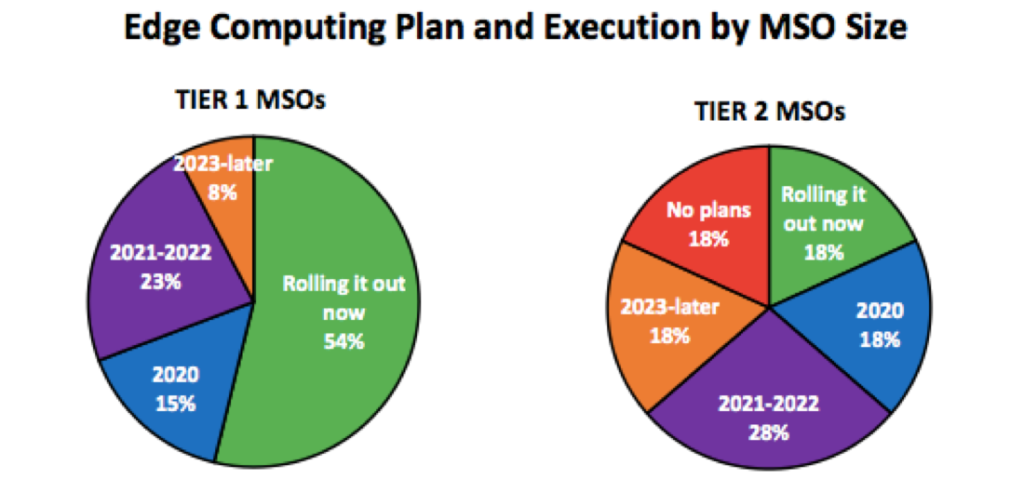

Over half of the executives said their edge computing plans are rolling out now. Another 25% said they will have a plan by 2022.

The differences in timing by MSO size are striking: Tier 1 executives are clearly planning and executing their edge computing strategies faster than their Tier 2 counterparts. In fact, almost twice as many Tier 1 MSOs are rolling out their plans now. What’s also interesting is that 18% of Tier 2s are not planning to develop an edge computing strategy.

In Summary

What are the implications of this research for service providers? In devising or reevaluating your edge computing strategy, start by prioritizing the use cases you expect to be the most popular. Understand the latency each one requires to deliver an excellent customer experience. Where will you place edge equipment to achieve this experience while investing at an acceptable level? Striking the right balance today and in the future is critical.

Learn More

For a copy of the Cable Operators & Edge Computing research report, visit https://www.broadbandsuccess.com/cable-operators-edge-computing/. Register for Episode 4 of the MEF Infinite Edge Series – Edge Computing: Bringing the Cloud Down-to-Earth, 14 April 2021. Details about this episode are coming soon!